Beyond Prompts: How to Make AI Actually Work in a Newsroom

Alexey Terekhov, media expert, Internews

To get the most out of AI, journalists need to learn a new role — that of a context engineer. Here’s the good news: this role builds on what journalists already do every day. You frame the assignment. You ask precise questions. You give your counterpart the background they need. The professional term for this is context engineering, and it’s what allows a team of five to punch well above its weight — handling workloads that used to require a staff three times the size.

If your newsroom already uses ChatGPT or another language model but the output keeps falling short (the writing sounds generic and unmistakably “AI”, a document summary drops a critical number, the suggested headlines feel more like ad copy than journalism), the model probably isn’t the problem. The problem is more likely how the task is framed and how much context, if any, the model is given.

From Prompt Engineering to Context Engineering

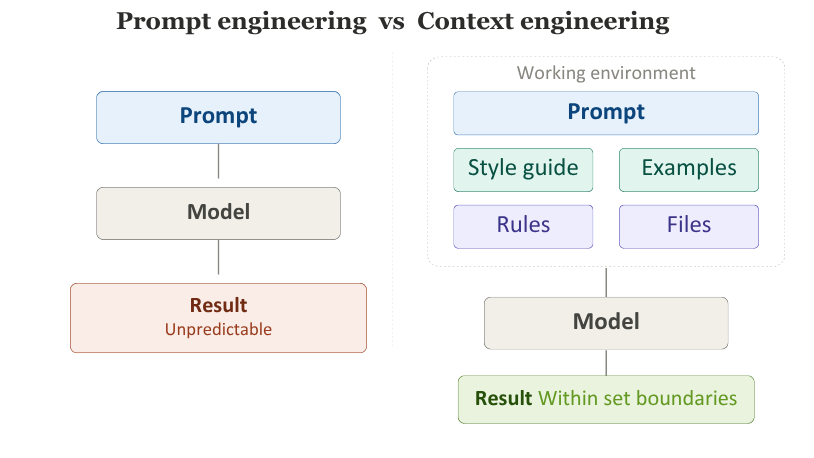

At its simplest, prompt engineering is the art of writing a good brief. You assign the model a role, describe the task, and set the format, tone, length, constraints, and success criteria. Even this basic step produces noticeably better results than typing “write me something good.”

But the real payoff comes when a newsroom stops relying on scattered, one-off prompts and starts working through consistent frameworks. Instead of just the request itself, the model also receives everything that makes the request meaningful: story context, the publication’s house style, examples of strong writing, a rule against fabricating facts, guidelines on quoting, a list of trusted sources, and the expected output format.

That is context engineering.

The term gained traction in mid-2025, after Andrej Karpathy — a research scientist and founding member of OpenAI — and Tobi Lütke, CEO of Shopify, each called context engineering a core competency for anyone working with AI, almost at the same time.

The difference is straightforward. Prompt engineering is knowing how to ask an expert the right question. Context engineering is preparing a briefing folder for that expert before the meeting — complete with documents, references, guidelines, and examples. One approach relies on a clever question. The other designs an environment where the model is far more likely to deliver what you actually need.

Any newsroom can now assemble an entire team of virtual assistants: one to process interviews, another to summarise documents, a third to adapt stories for social platforms. But just like a real team, the quality of the output depends on the quality of the brief. A capable assistant with a vague brief will still produce mediocre work.

When you set up a custom chat, upload your style guide, add working documents, and define the rules — you are already doing context engineering. And when the system also pulls in past articles, relevant files, source lists, and constraints for each specific request, it becomes a genuinely powerful tool.
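The "briefing folder" idea can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's API: the `ContextPackage` class, its field names, and the sample values are all hypothetical. It only shows how standing context (house style, rules, examples, trusted sources) and a one-off request might be combined into a single brief.

```python
from dataclasses import dataclass, field


@dataclass
class ContextPackage:
    """Standing context sent with every request (hypothetical structure)."""
    house_style: str
    rules: list[str]
    examples: list[str] = field(default_factory=list)
    trusted_sources: list[str] = field(default_factory=list)
    story_context: str = ""

    def render(self, request: str) -> str:
        """Combine the standing context and the one-off request into one brief."""
        sections = [
            ("HOUSE STYLE", self.house_style),
            ("RULES", "\n".join(f"- {rule}" for rule in self.rules)),
            ("EXAMPLES OF STRONG WRITING", "\n\n".join(self.examples)),
            ("TRUSTED SOURCES", "\n".join(f"- {src}" for src in self.trusted_sources)),
            ("STORY CONTEXT", self.story_context),
            ("REQUEST", request),
        ]
        # Skip empty sections so the model is not shown blank headings.
        return "\n\n".join(
            f"## {title}\n{body}" for title, body in sections if body.strip()
        )


# Illustrative values only; a real newsroom would load these from its own files.
package = ContextPackage(
    house_style="Neutral tone. Short sentences. No clickbait.",
    rules=["Never fabricate facts.", "Quote sources word for word."],
    story_context="Follow-up to an earlier report on the same topic.",
)
brief = package.render("Suggest three headlines for the attached draft.")
print(brief)
```

The request is the only part that changes between calls. Everything else is written once and reused, which is the practical difference between a one-off prompt and a configured environment.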

Where AI Actually Saves Time

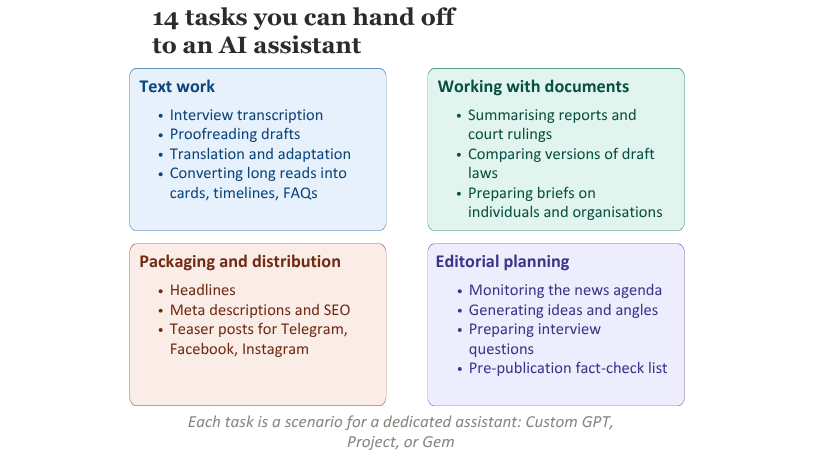

AI delivers the biggest returns not in writing final pieces, but in the routine production tasks that eat up hours every day.

Interview processing. Paired with a speech-to-text service, a model can quickly turn a raw transcript into a structured draft — pulling out key points, grouping responses by topic, and suggesting a narrative arc for the final piece.

Long documents. When a journalist needs to get up to speed on a government report, a draft law, or a court ruling, the model can surface the main findings, highlight key figures, flag the document’s limitations, and identify the questions it leaves unanswered. This doesn’t replace reading — it speeds up navigation through dense material.

SEO and platform packaging. Headlines, leads, meta descriptions, teaser copy for Facebook or Telegram — the model handles all of these reasonably well, as long as it knows your house rules: tone, length, acceptable vocabulary, and a firm no to clickbait.

Background briefs. A model can quickly sketch out a starting structure on a topic, a public figure, or an organisation — not as a publishable piece, but as a launchpad for proper journalistic verification. One rule is non-negotiable here: the AI must flag anything it’s uncertain about and never pass off guesswork as established fact.

Story discovery. This might be the most underrated use case. Models with web access can serve as an editorial radar — spotting emerging topics, sudden spikes in public discussion, signs of possible manipulation, and blind spots in the news agenda.

In every one of these cases, AI isn’t useful because it “can write.” It’s useful because it absorbs part of the production load and frees up time for the work that only a journalist can do.

What AI Does Not Replace

This is where the line has to be drawn clearly. AI does not replace editorial judgement. It does not replace a reporter’s instinct. It does not replace ethical responsibility. A model cannot understand a story the way a journalist does after following it for a month. It does not fact-check the way humans do. And it is never accountable for mistakes — the newsroom is.

So the conversation about context engineering is not just about productivity. It’s about discipline. It’s about integrating AI in a way that strengthens your journalists, rather than letting them mistake a rough draft for a finished story.

Every AI-generated background brief needs to be verified. Every document summary needs to be checked against the original. Every model-suggested headline must pass editorial review. Skip these steps, and instead of saving time you simply add a new layer of errors.
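Part of checking a summary against the original can be supported by a simple script. The sketch below flags figures that appear in a summary but nowhere in the source document. The function names are hypothetical, and it assumes English-style number formatting (comma as thousands separator, point as decimal mark). It is an aid to the human check, not a substitute for it.

```python
import re

# Matches integers, decimals and thousands-separated figures such as 1,250 or 3.5.
NUMBER = re.compile(r"\d+(?:[.,]\d+)*")


def extract_numbers(text: str) -> set[str]:
    """Return the figures in a text, with thousands separators removed."""
    return {match.replace(",", "") for match in NUMBER.findall(text)}


def unsupported_numbers(summary: str, original: str) -> set[str]:
    """Figures that appear in the summary but nowhere in the original."""
    return extract_numbers(summary) - extract_numbers(original)


# Made-up sentences for illustration.
original = "The programme reached 1,250 households in 2024, up 3.5% on the previous year."
summary = "The programme reached 1,520 households in 2024, a rise of 3.5%."
print(unsupported_numbers(summary, original))  # {'1520'}
```

A check like this only catches figures that are absent from the source. It will not notice a correct number attached to the wrong claim, or a number written out in words, so the editor still reads both texts.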

How This Works in ChatGPT, Claude, and Gemini

Each model has its own strengths. ChatGPT is a good fit when the newsroom wants a library of specialised assistants. Custom GPTs let you assign a role, write instructions, upload reference files, and connect external tools — all without a technical team.

Claude excels at working with long documents and analytical tasks. Its Projects feature is ideal when you need to keep a style guide, a publication archive, or a body of research material readily available.

Gemini is the most interesting option for newsrooms that already run on Google Docs, Drive, and Gmail. Its edge is tight integration with the working environment, plus strong search capabilities.

In practice, many teams find it makes sense to use two or even three models — each for a different purpose. One for daily routine, another for deep analysis, a third for integration with the rest of the workflow.

How to Start

Pick the one task that costs your newsroom the most time. It could be interview processing, document analysis, SEO packaging, or news monitoring.

Step one: define a working framework for that task — the model’s role, the expected output, constraints, trusted sources, quality criteria, and a rule that a human must always sign off on the result.

Say you start with interviews. A prompt might look like this:

“You are an editorial assistant. Here is an interview transcript. Organise it by topic and highlight key quotes word for word. Do not rephrase direct quotes. Do not add anything that isn’t in the transcript. Deliver a draft of 800–1,000 words, divided into thematic sections.”

That’s prompt engineering — a well-crafted request. Test it in a regular chat window. If the output works, move to the next stage.
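One way to test whether the output respects the "do not rephrase direct quotes" rule is to compare every quoted passage in the draft with the transcript. The following is a rough sketch with hypothetical function names. It assumes quotes are marked with double quotation marks (straight or curly) and ignores differences in case, spacing, and closing punctuation.

```python
import re

# A passage enclosed in straight or curly double quotation marks.
QUOTED = re.compile(r'["“]([^"“”]+)["”]')


def normalise(text: str) -> str:
    """Lower-case and collapse whitespace so layout differences are ignored."""
    return " ".join(text.split()).lower()


def altered_quotes(draft: str, transcript: str) -> list[str]:
    """Return quoted passages from the draft that are not found in the transcript."""
    source = normalise(transcript)
    suspicious = []
    for quote in QUOTED.findall(draft):
        # Drop punctuation conventionally placed inside the closing quotation mark.
        core = normalise(quote).strip(" .,;:!?")
        if core not in source:
            suspicious.append(quote)
    return suspicious


# Made-up transcript and draft for illustration.
transcript = "We will not raise taxes this year. That is a promise I made to voters."
draft = (
    "The mayor said: “We will not raise taxes this year.” "
    "She called it “a pledge I made to voters.”"
)
print(altered_quotes(draft, transcript))  # ['a pledge I made to voters.']
```

Anything the function returns is a prompt for a human to go back to the recording. An empty list does not prove the quotes are used fairly or in context.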

Now for context engineering. In ChatGPT, create a Custom GPT. Paste the prompt into the Instructions field and add a line: “When responding, refer to the files in the Knowledge section.” Then upload your style guide and a couple of examples of good interview write-ups into Knowledge.

In Claude, open a Project. Add the same files to Project Knowledge and put your rules in the project’s custom instructions, for example under a heading such as “Things we never do.”

In Gemini, create a Gem and link a Google Doc with your style guide directly from Drive.

Done. From now on, the model works within the right framework every time — no need to repeat the brief. The instructions are already baked in. This is no longer a one-off prompt. It’s a configured working environment. This is context engineering in practice.

Step two: repeat the process for the next task. Once interviews are running smoothly, build the same kind of environment for document summaries. Then for SEO packaging. Each new setup is one more assistant on your editorial team.

Step three: appoint one or two people in the newsroom to coordinate the process — collecting what works, sharing it with colleagues, and helping anyone who’s still finding their feet.

One task, one owner, a couple of weeks to set up. Then move on to the next. That’s how AI stops being a novelty and becomes part of how the newsroom actually works.

A Basic AI Kit for the Newsroom

Over time, the newsroom builds up five core elements.

A library of configured assistants — Custom GPTs, Projects, or Gems set up for key roles: interview processor, document analyst, SEO packager, background researcher, copy editor, translator.

An editorial style guide for AI — a short document that tells the model what tone to use, what to avoid, and what a good output looks like. Upload it to the Knowledge or Project Knowledge section of every assistant.

A verification protocol — clear rules about what must be checked by a human, what can be used as a draft, and who owns the final version.

An internal sandbox — a shared chat, channel, or document where the team logs what worked, what failed, and any new approaches, so that lessons don’t stay trapped in private conversations.

An AI coordinator — one or two people who help colleagues set up their assistants and weave them into daily routines.
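The verification protocol in the list above can start as something as small as a lookup table. The sketch below encodes the three checks this article names (verify background briefs, check summaries against the original, review headlines editorially). The identifiers and the `can_publish` rule are hypothetical; each newsroom would define its own.

```python
from typing import Optional

# Required human checks per output type, following the rules stated in the article.
REQUIRED_CHECKS = {
    "background_brief": {"facts_verified"},
    "document_summary": {"checked_against_original"},
    "headline": {"editorial_review"},
}


def missing_checks(kind: str, completed: set[str]) -> set[str]:
    """Return the human checks still outstanding for an AI-generated output."""
    if kind not in REQUIRED_CHECKS:
        # Unknown output types are rejected rather than waved through.
        raise ValueError(f"No verification rule defined for {kind!r}")
    return REQUIRED_CHECKS[kind] - completed


def can_publish(kind: str, completed: set[str], owner: Optional[str]) -> bool:
    """Publishable only when all checks are done and a named human owns the result."""
    return not missing_checks(kind, completed) and owner is not None


print(missing_checks("document_summary", set()))
print(can_publish("headline", {"editorial_review"}, owner="duty editor"))
```

Writing the rules down as data makes the gaps visible: an output type with no entry has no agreed check, and an output with no owner cannot be signed off.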

None of this is exotic. It’s simply a new layer of editorial infrastructure.

In the years ahead, a newsroom’s competitive edge won’t come from having access to AI — everyone will have that. The edge will come from knowing how to give the model the right role, the right context, and the right boundaries. In short: context engineering.

The analysis is published as part of the project “Resilient press, informed voters: protecting elections in Moldova against disinformation” financially supported by the Embassy of the Netherlands in Moldova. The opinions expressed in the analysis belong to the authors and do not necessarily reflect the donor’s point of view.